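1. Preparation

First, you need a personal or enterprise Alibaba Cloud account with available balance. Purchase one Alibaba Cloud ECS instance to serve as the deployment machine, and register a usable domain name. Note that later we will also purchase an Alibaba Cloud k8s cluster, which comes with several additional ECS instances as worker nodes.

Recommended configuration for the deployment machine: 4 vCPU, 8 GiB, CentOS 7.8 64-bit, 100 Mbps bandwidth, pay-as-you-go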

2. Install jdk-1.8

    yum install java-1.8.0-openjdk.x86_64 -y
    yum install -y java-1.8.0-openjdk-devel.x86_64
    java -version
    # openjdk version "1.8.0_372"
    # OpenJDK Runtime Environment (build 1.8.0_372-b07)
    # OpenJDK 64-Bit Server VM (build 25.372-b07, mixed mode)

3. Install nexus

    cd /usr/local
    mkdir -p soft/nexus
    cd soft/nexus
    wget --no-check-certificate https://download.sonatype.com/nexus/3/nexus-3.56.0-01-unix.tar.gz
    tar zxvf nexus-3.56.0-01-unix.tar.gz

Configure the nexus environment variables:

    vim /etc/profile
    ## Append the following to the end of the profile file
    # nexus
    export NEXUS_HOME=/usr/local/soft/nexus/nexus-3.56.0-01
    export PATH=$PATH:$NEXUS_HOME/bin

    source /etc/profile
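The /etc/profile edits in this guide can also be scripted. The following is a minimal sketch, assuming a hypothetical helper named `append_once` (not part of any tool used here) that appends a line only when an identical line is not already present, so re-running the setup does not duplicate the exports. It runs against a scratch file rather than the real /etc/profile.

```shell
#!/bin/sh
# append_once FILE LINE: append LINE to FILE unless an identical line exists.
# Hypothetical helper for illustration only.
append_once() {
  grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

profile=$(mktemp)   # scratch file standing in for /etc/profile
append_once "$profile" 'export NEXUS_HOME=/usr/local/soft/nexus/nexus-3.56.0-01'
append_once "$profile" 'export PATH=$PATH:$NEXUS_HOME/bin'
# Second run: nothing is added because both lines already exist.
append_once "$profile" 'export NEXUS_HOME=/usr/local/soft/nexus/nexus-3.56.0-01'
append_once "$profile" 'export PATH=$PATH:$NEXUS_HOME/bin'

line_count=$(wc -l < "$profile" | tr -d ' ')
echo "lines in scratch profile: $line_count"
```

Pointing the helper at /etc/profile and then running `source /etc/profile` should give the same result as the manual edit.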

Change the nexus port and start it (open port 8084 in the Alibaba Cloud security group):

    vim nexus-3.56.0-01/etc/nexus-default.properties
    ## Replace the corresponding entries in the original file with the following
    # Jetty section
    application-port=8084
    application-host=0.0.0.0

    cd nexus-3.56.0-01/bin
    nexus start
    # Other commands (nexus stop/restart/status)
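If you prefer not to edit the properties file by hand, the change can be scripted. This is only a sketch: `set_prop` is a hypothetical helper, GNU sed (as shipped with CentOS) is assumed, and the starting values in the scratch file are made up for the demo.

```shell
#!/bin/sh
# set_prop FILE KEY VALUE: replace an existing "KEY=..." line, or append one.
# Hypothetical helper for illustration; it is not part of Nexus.
set_prop() {
  file=$1
  key=$2
  value=$3
  if grep -q "^${key}=" "$file"; then
    sed -i "s|^${key}=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Demo against a scratch copy, so the real file is untouched.
demo=$(mktemp)
printf 'application-port=8081\n' > "$demo"
set_prop "$demo" application-port 8084      # existing key: replaced
set_prop "$demo" application-host 0.0.0.0   # missing key: appended
cat "$demo"
```

On the deployment machine the target file would be nexus-3.56.0-01/etc/nexus-default.properties, followed by a nexus restart.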

Open {your-nexus-address:port} in a browser.

Default account: admin

Default password: view it with the following command

    cat /usr/local/soft/nexus/sonatype-work/nexus3/admin.password

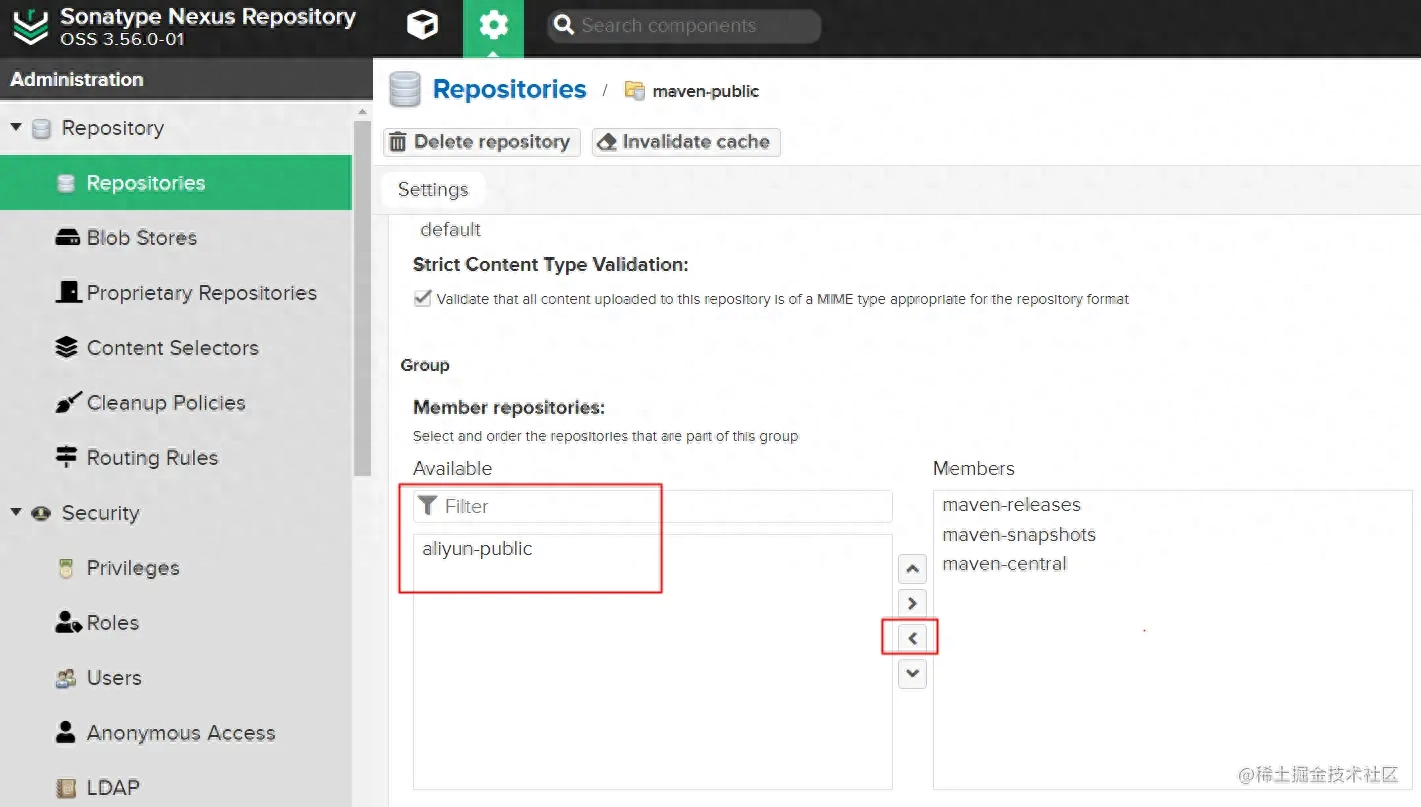

Add the Alibaba Cloud repository to maven-public:

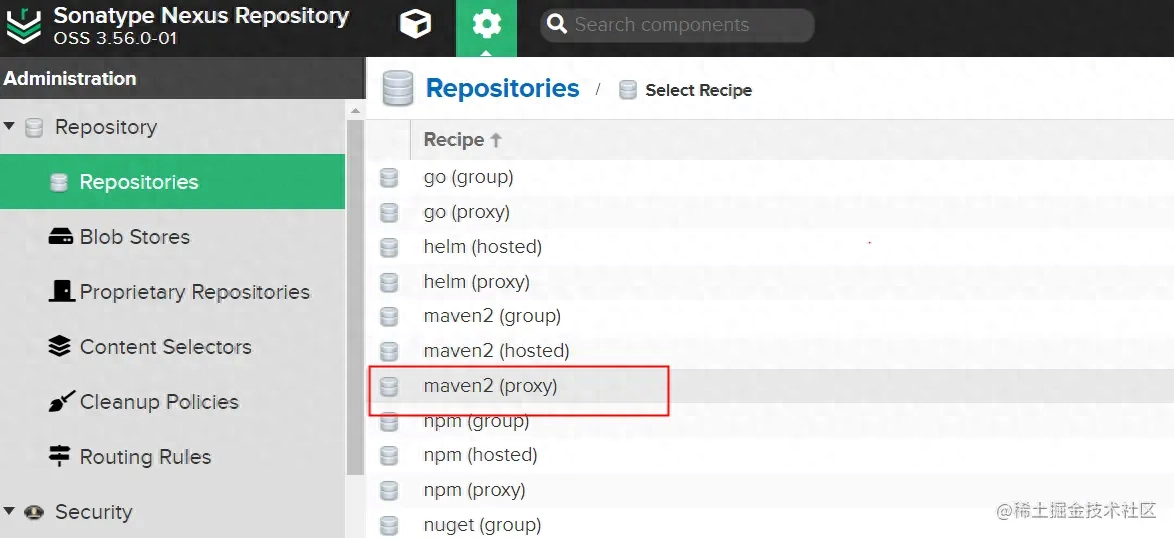

Select maven2 (proxy):

Enter the name aliyun-public and the address /repository/…

Select maven-public and add the repository just created to it:

4. Install maven

    cd /usr/local
    mkdir -p soft/maven
    cd soft/maven
    wget --no-check-certificate https://dlcdn.apache.org/maven/maven-3/3.9.3/binaries/apache-maven-3.9.3-bin.tar.gz
    tar zxvf apache-maven-3.9.3-bin.tar.gz

Configure the maven environment variables:

    vim /etc/profile
    ## Append the following to the end of the profile file
    # maven
    export MAVEN_HOME=/usr/local/soft/maven/apache-maven-3.9.3
    export PATH=$PATH:$MAVEN_HOME/bin

    source /etc/profile
    mvn --version
    # Java version: 1.8.0_372, vendor: Red Hat, Inc., runtime: /usr/lib/jvm/java-1.8.0-openjdk-1.8.0.372.b07-1.el7_9.x86_64/jre
    # Default locale: en_US, platform encoding: UTF-8
    # OS name: "linux", version: "3.10.0-1127.19.1.el7.x86_64", arch: "amd64", family: "unix"

Edit maven's settings.xml so that it points to the nexus service we set up ourselves:

    vim apache-maven-3.9.3/conf/settings.xml

settings.xml:

Remember to replace {your-nexus-password} and {your-nexus-address:port}

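    <settings>
      <servers>
        <server>
          <id>nexus</id>
          <username>admin</username>
          <password>${your-nexus-password}</password>
        </server>
        <server>
          <id>maven-releases</id>
          <username>admin</username>
          <password>${your-nexus-password}</password>
        </server>
        <server>
          <id>maven-snapshots</id>
          <username>admin</username>
          <password>${your-nexus-password}</password>
        </server>
      </servers>
      <mirrors>
        <mirror>
          <id>nexus</id>
          <mirrorOf>*</mirrorOf>
          <url>http://${your-nexus-address:port}/repository/maven-public/</url>
        </mirror>
        <mirror>
          <id>alimaven</id>
          <mirrorOf>central</mirrorOf>
          <url>https://maven.aliyun.com/repository/public/</url>
        </mirror>
        <mirror>
          <id>alimaven_central</id>
          <mirrorOf>central</mirrorOf>
          <url>http://maven.aliyun.com/nexus/content/repositories/central/</url>
        </mirror>
      </mirrors>
      <profiles>
        <profile>
          <id>nexus</id>
          <repositories>
            <repository>
              <id>central</id>
              <url>http://central</url>
              <releases><enabled>true</enabled></releases>
              <snapshots><enabled>true</enabled></snapshots>
            </repository>
          </repositories>
          <pluginRepositories>
            <pluginRepository>
              <id>central</id>
              <url>http://central</url>
              <releases><enabled>true</enabled></releases>
              <snapshots><enabled>true</enabled></snapshots>
            </pluginRepository>
          </pluginRepositories>
        </profile>
        <profile>
          <id>jdk1.8</id>
          <activation>
            <activeByDefault>true</activeByDefault>
            <jdk>1.8</jdk>
          </activation>
          <properties>
            <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
            <maven.compiler.source>1.8</maven.compiler.source>
            <maven.compiler.target>1.8</maven.compiler.target>
            <maven.compiler.compilerVersion>1.8</maven.compiler.compilerVersion>
          </properties>
        </profile>
      </profiles>
      <activeProfiles>
        <activeProfile>nexus</activeProfile>
        <activeProfile>jdk1.8</activeProfile>
      </activeProfiles>
    </settings>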

5. Install gitlab

    vi /etc/yum.repos.d/gitlab-ce.repo
    ## Write the following into the file
    [gitlab-ce]
    name=Gitlab CE Repository
    baseurl=https://mirrors.tuna.tsinghua.edu.cn/gitlab-ce/yum/el$releasever/
    gpgcheck=0
    enabled=1

    yum makecache
    yum clean all
    yum install -y gitlab-ce

Change the gitlab port configuration and restart:

    vi /etc/gitlab/gitlab.rb
    ## Write the following into the file, and open port 8081 in the Alibaba Cloud security group
    gitlab_rails['time_zone'] = 'Asia/Shanghai'
    external_url 'http://{public-IP-of-your-ECS}:8081'
    puma['port'] = 8066
    nginx['listen_port'] = 8081

    gitlab-ctl reconfigure
    gitlab-ctl restart
    # Other commands (gitlab-ctl stop/start)
    systemctl enable gitlab-runsvdir.service

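Open {your-gitlab-address:port} in a browser.

Default account: root

Default password: view it with the following command

    cat /etc/gitlab/initial_root_password

Creating accounts and assigning permissions afterwards is not covered here; it should be a basic skill for any programmer.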

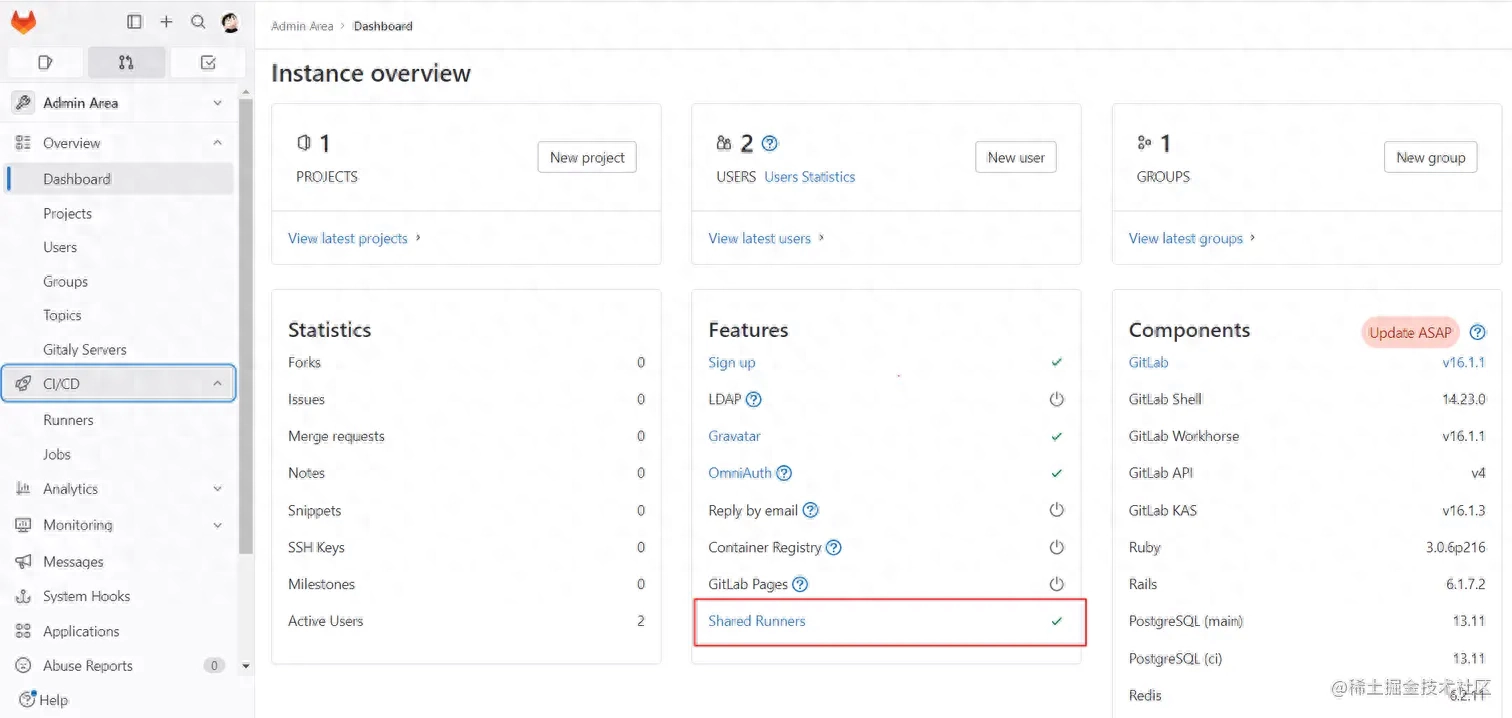

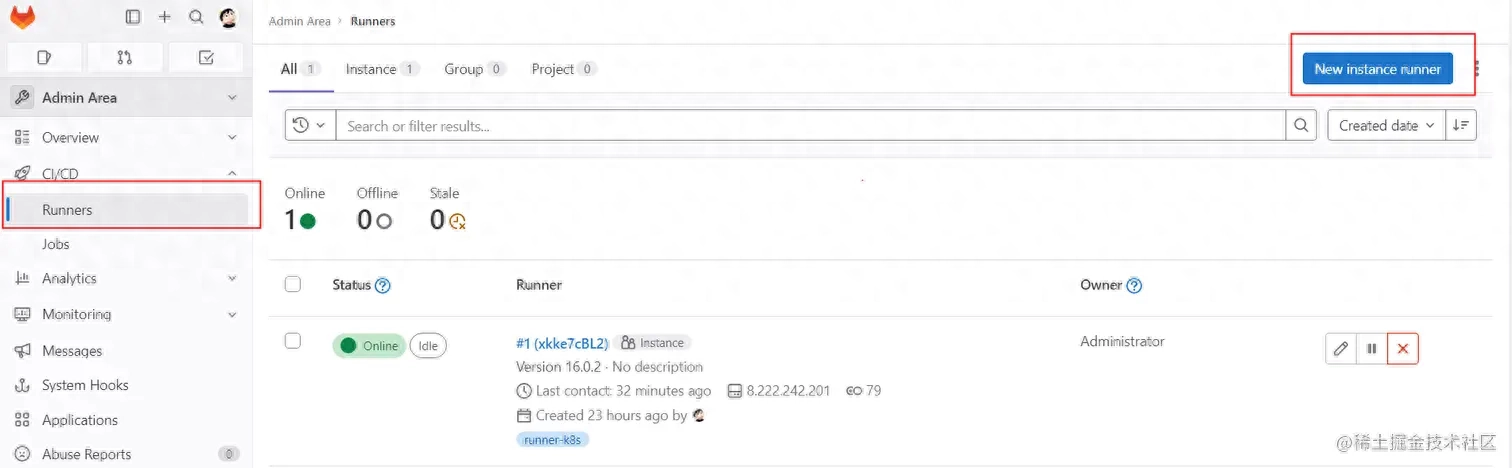

6. Install gitlab-runner

    ## Go back to the root directory, or any directory you like
    wget --no-check-certificate https://mirrors.tuna.tsinghua.edu.cn/gitlab-runner/yum/el7/gitlab-runner-16.0.2-1.x86_64.rpm
    yum -y install gitlab-runner-16.0.2-1.x86_64.rpm
    systemctl status gitlab-runner
    # ● gitlab-runner.service - GitLab Runner
    #   Loaded: loaded (/etc/systemd/system/gitlab-runner.service; enabled; vendor preset: disabled)
    #   Active: active (running) since Wed 2023-07-05 18:06:25 CST; 21h ago
    #   Main PID: 2045 (gitlab-runner)
    #   Tasks: 10
    #   Memory: 21.7M
    #   CGroup: /system.slice/gitlab-runner.service
    #           └─2045 /usr/bin/gitlab-runner run --working-directory /home/gitlab-runner --config /etc/gitlab-runner/config.toml --service gitlab-runner --user root

Set the gitlab-runner user to root:

    sudo gitlab-runner uninstall
    gitlab-runner install --working-directory /home/gitlab-runner --user root
    systemctl restart gitlab-runner.service

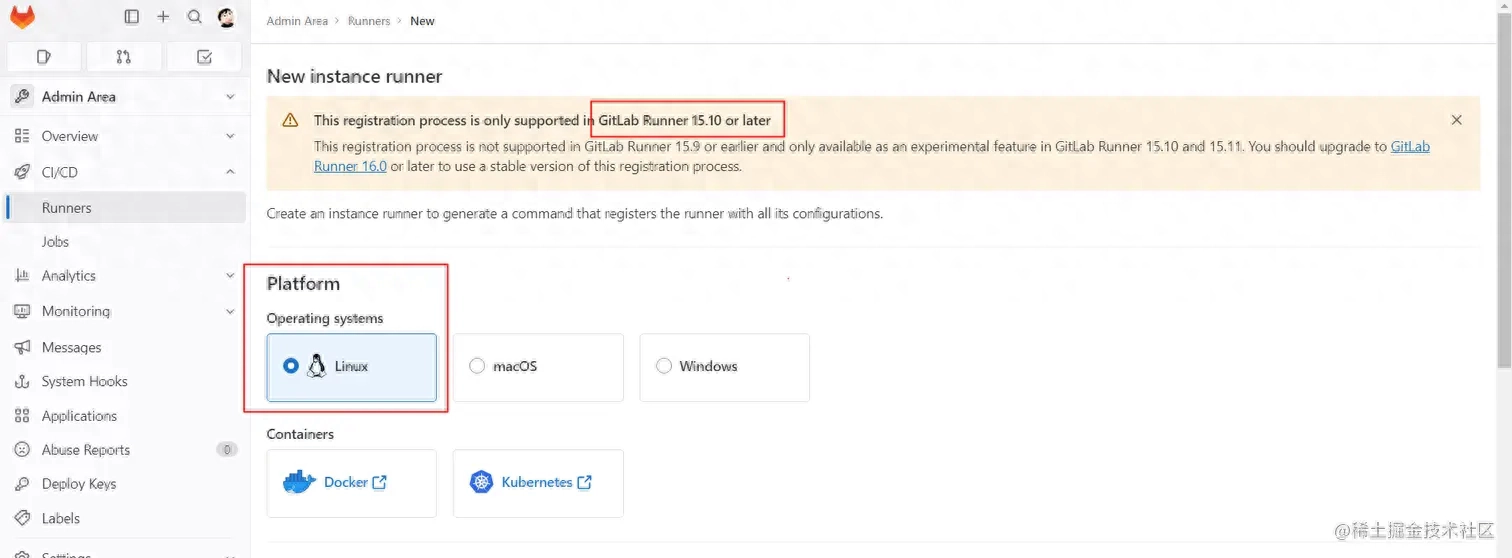

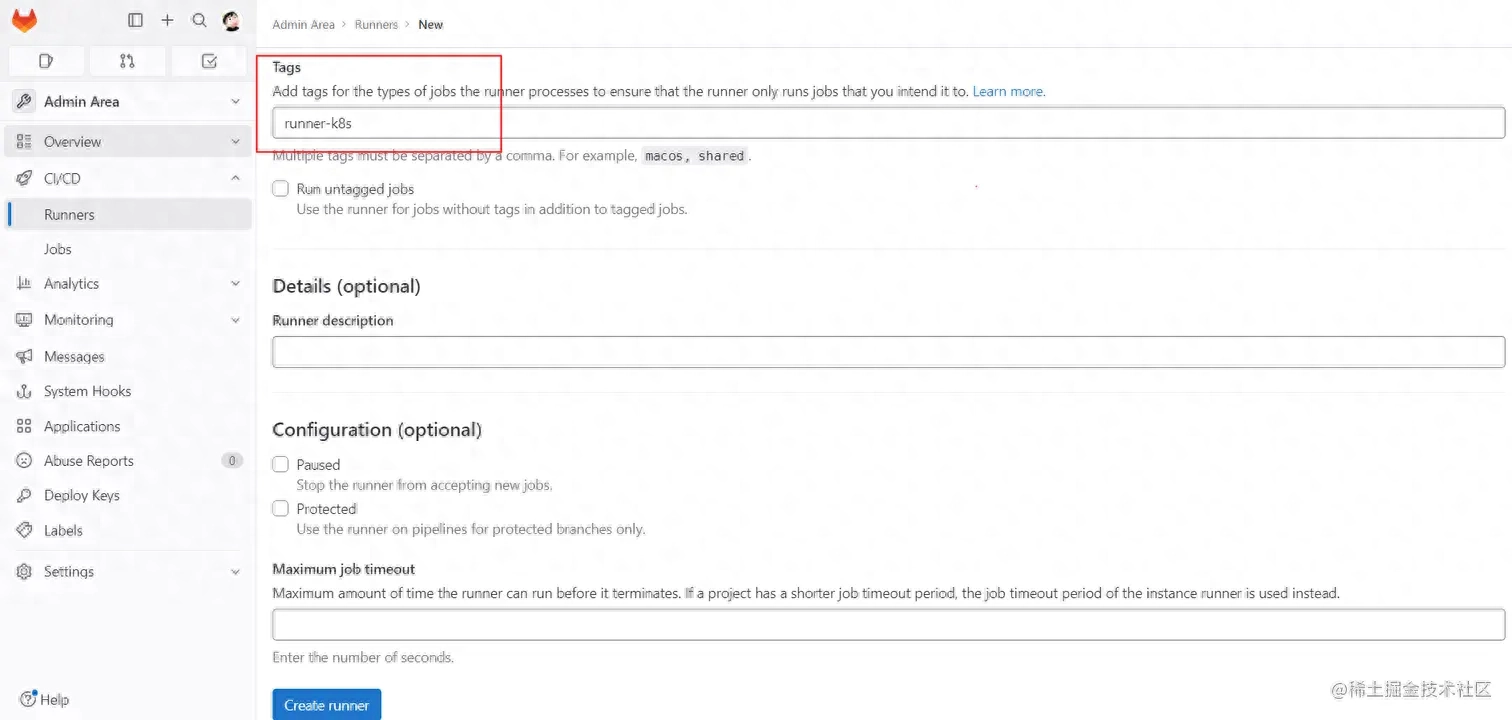

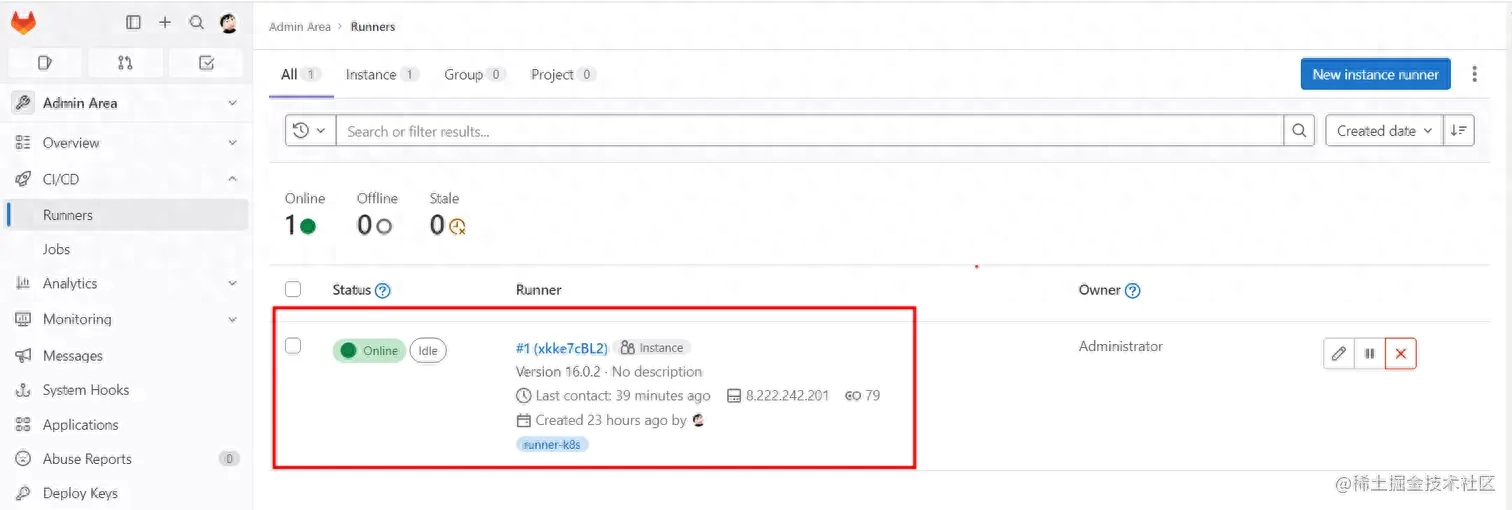

Register gitlab-runner with gitlab, using the tag runner-k8s:

Note the minimum version requirement shown on the page; some of the settings can also be changed later:

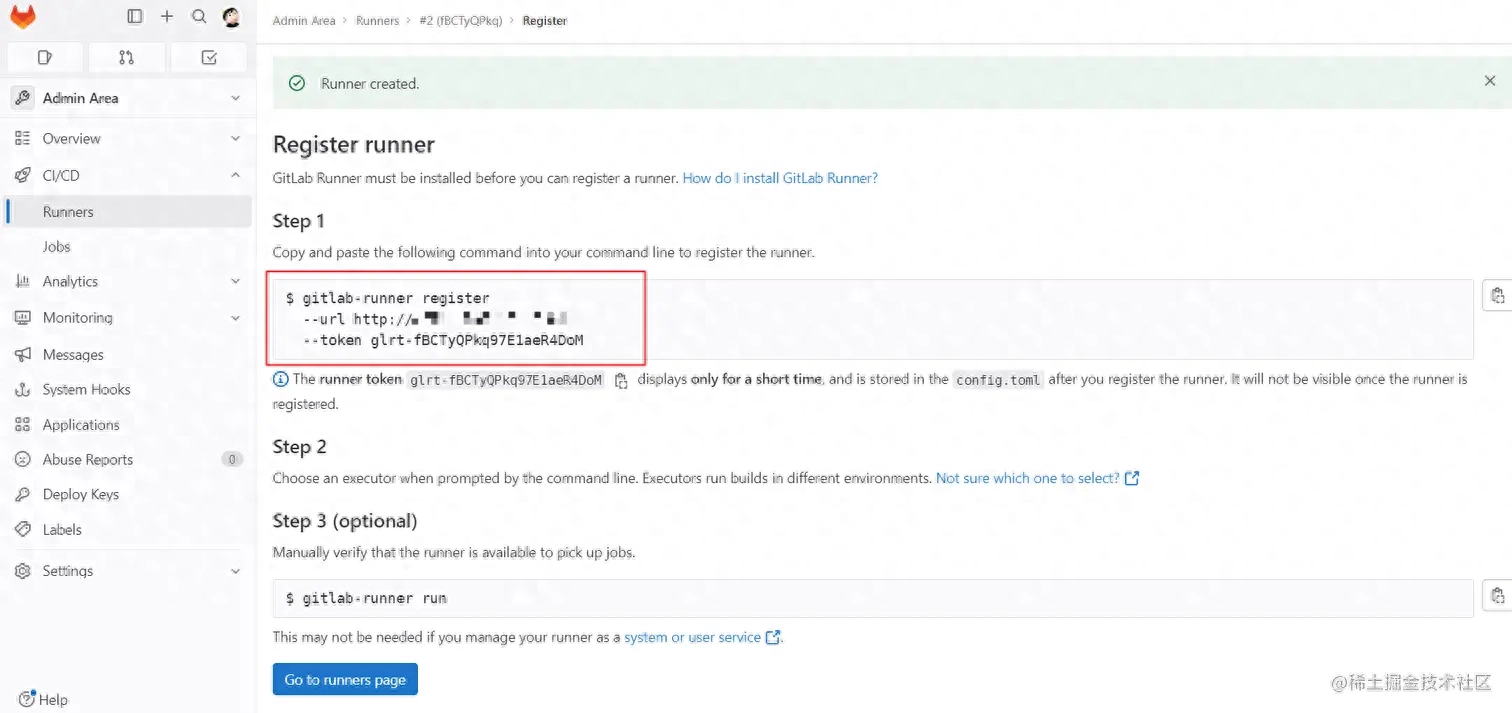

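Just run the command, including the token, that gitlab gives you:

    gitlab-runner register --url http://{your-gitlab-address:port} --token glrt-xkke7cBL2HDux-o9ttAz

After running the command, answer the prompts that follow, step by step: {your-gitlab-address:port}; runner-k8s (the runner's tag, which is used later); shell (choose shell to run commands directly on the machine).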
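The same registration can also be done without the interactive prompts. The sketch below only builds and prints the command (a dry run) so it can be reviewed first. The URL and token are placeholders, and it assumes the `--non-interactive`, `--executor` and `--description` options of `gitlab-runner register`; check `gitlab-runner register --help` on your version before relying on it.

```shell
#!/bin/sh
# Placeholders: replace both values with the ones shown on your GitLab runner page.
GITLAB_URL="http://gitlab.example.com:8081"
RUNNER_TOKEN="glrt-REPLACE_ME"

# Build the command as a string and print it first (dry run, nothing is registered).
register_cmd="gitlab-runner register --non-interactive --url $GITLAB_URL --token $RUNNER_TOKEN --executor shell --description runner-k8s"
echo "$register_cmd"
```

To actually register, run the printed command on the deployment machine.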

7. Install docker

    yum -y install net-tools yum-utils
    yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
    yum -y install docker-ce docker-ce-cli containerd.io
    systemctl enable docker
    systemctl start docker
    docker --version
    # Docker version 24.0.2, build cb74dfc

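Search for "Container Registry" (容器镜像服务) in Alibaba Cloud and create a personal instance:

Configure our own Alibaba Cloud registry mirror (accelerator) address:

    mkdir -p /etc/docker
    sudo tee /etc/docker/daemon.json <<-'EOF'
    {
      "registry-mirrors": ["https://{your-accelerator-address}.mirror.aliyuncs.com"]
    }
    EOF
    sudo systemctl daemon-reload
    sudo systemctl restart docker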

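Open the personal instance, then log in using the public-network registry address and your Alibaba Cloud account:

    sudo docker login --username={your-alibaba-cloud-account} registry.ap-southeast-1.aliyuncs.com
    # Enter your password when prompted to complete the login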

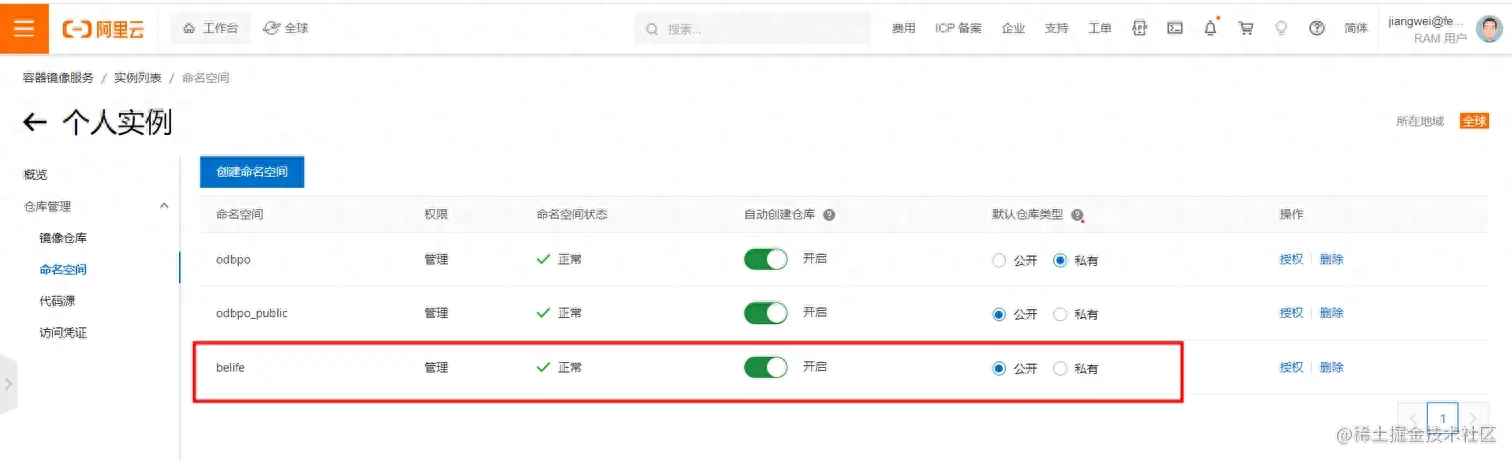

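Create a namespace named belife, which is used later. A public namespace is less secure but needs no docker login; a private one requires docker login on every ECS machine. Choose whichever suits you (for ease of demonstration we choose public):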

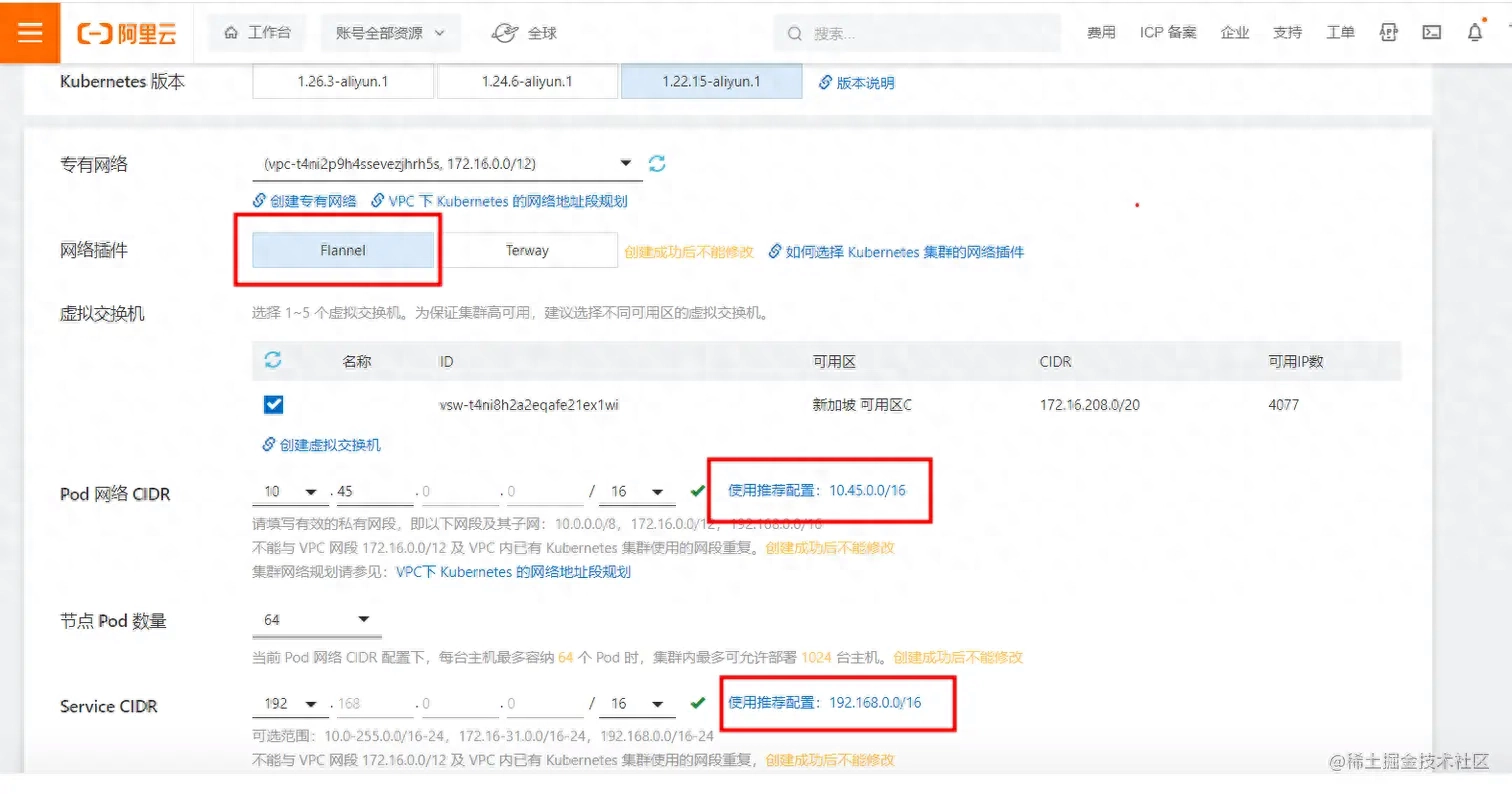

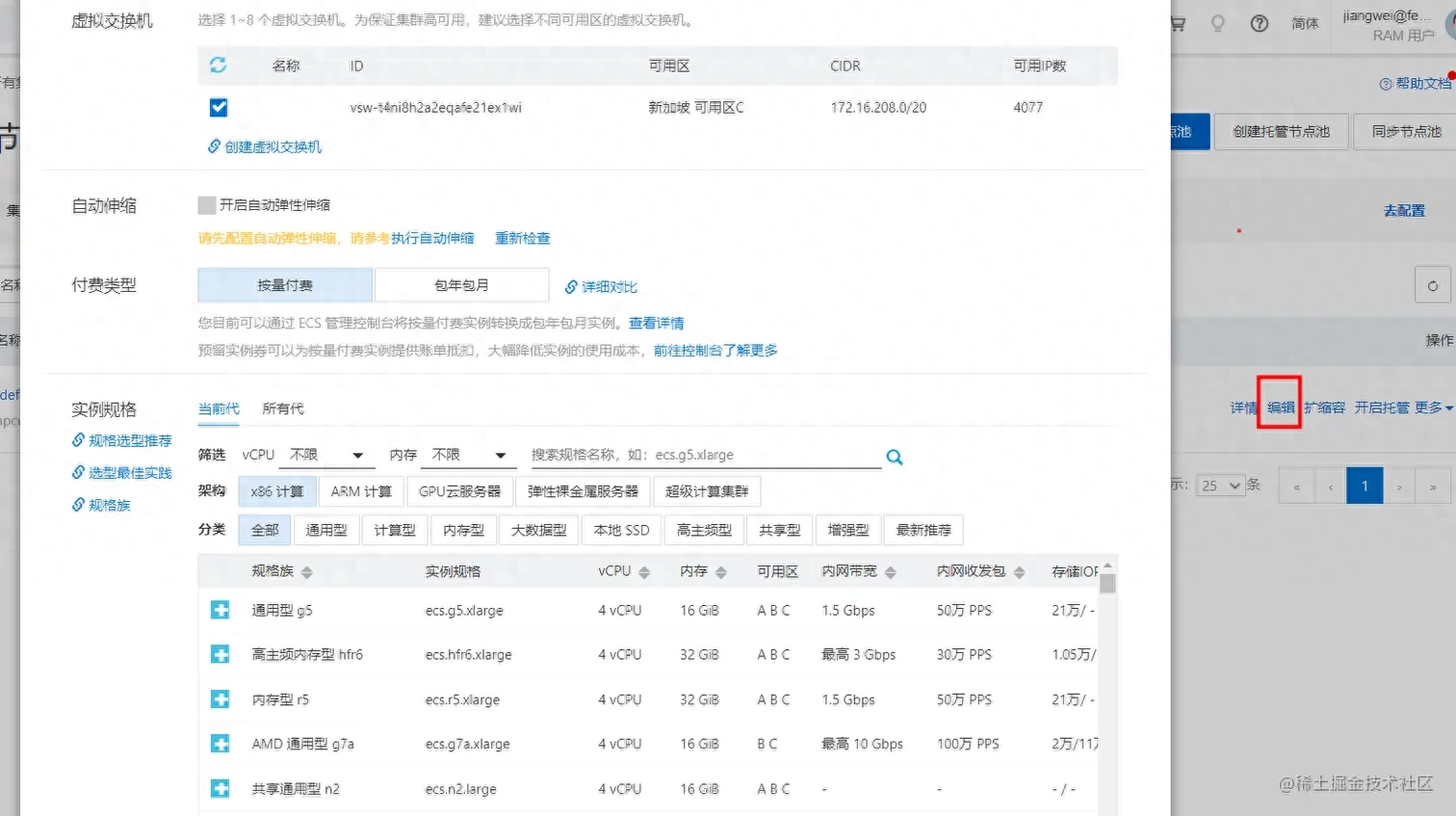

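8. Purchase an Alibaba Cloud k8s cluster

Search for "Container Service for Kubernetes" (容器服务Kubernetes版) in Alibaba Cloud. The recommended purchase configuration is shown here; for the prerequisite k8s knowledge, see the author's own column:

Kubernetes Principles and Practice column -->

Choose version 1.22, because versions 1.24 and above no longer support the Docker container runtime, which makes things more troublesome: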

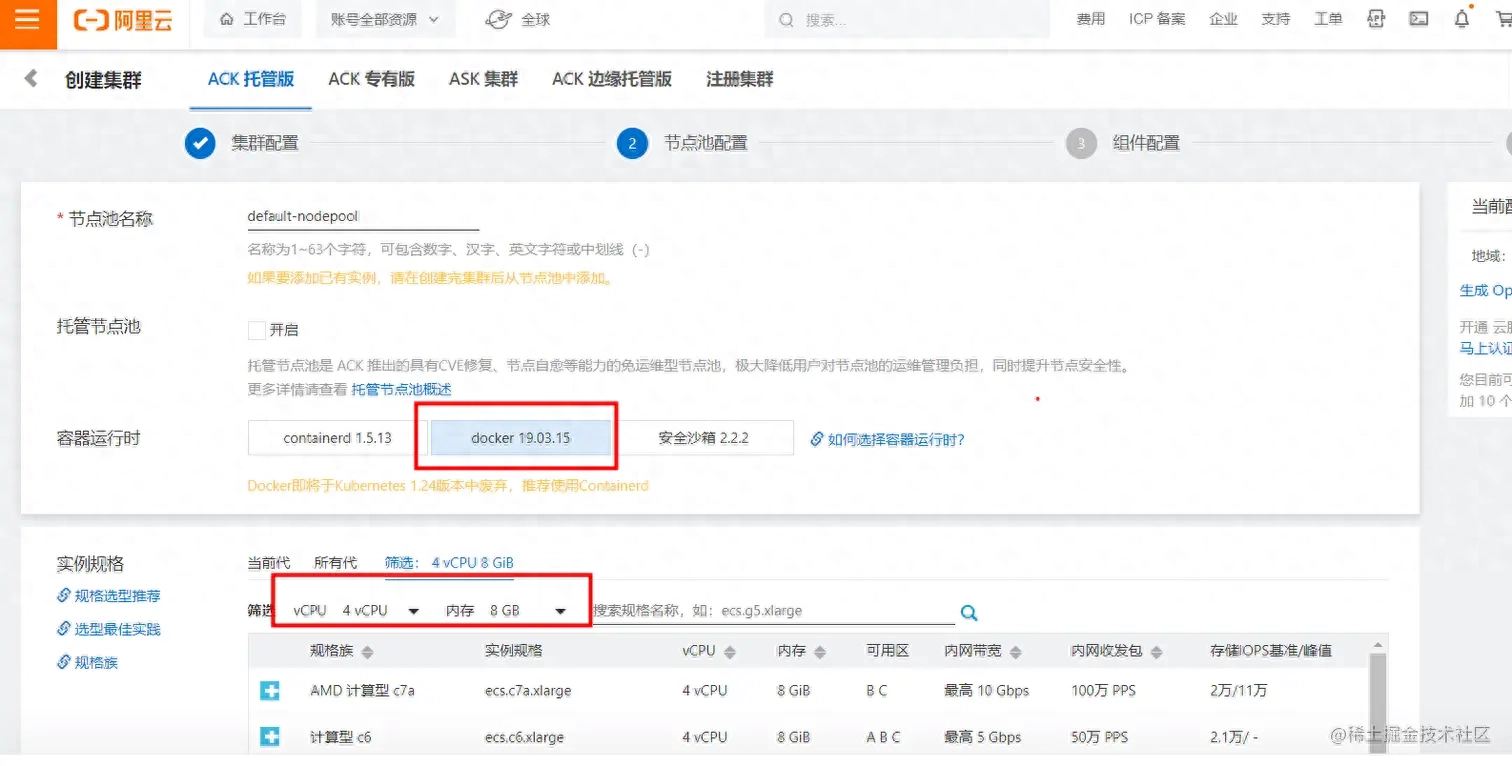

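Choose at least 4 cores and 8 GB for the node configuration, otherwise it is hard to meet the minimum running requirements:

We choose nginx-ingress; after the purchase, Alibaba Cloud automatically creates the SLB load balancer and the other required infrastructure:

Finally, here is the full list of the configuration we selected, with the approximate billing: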

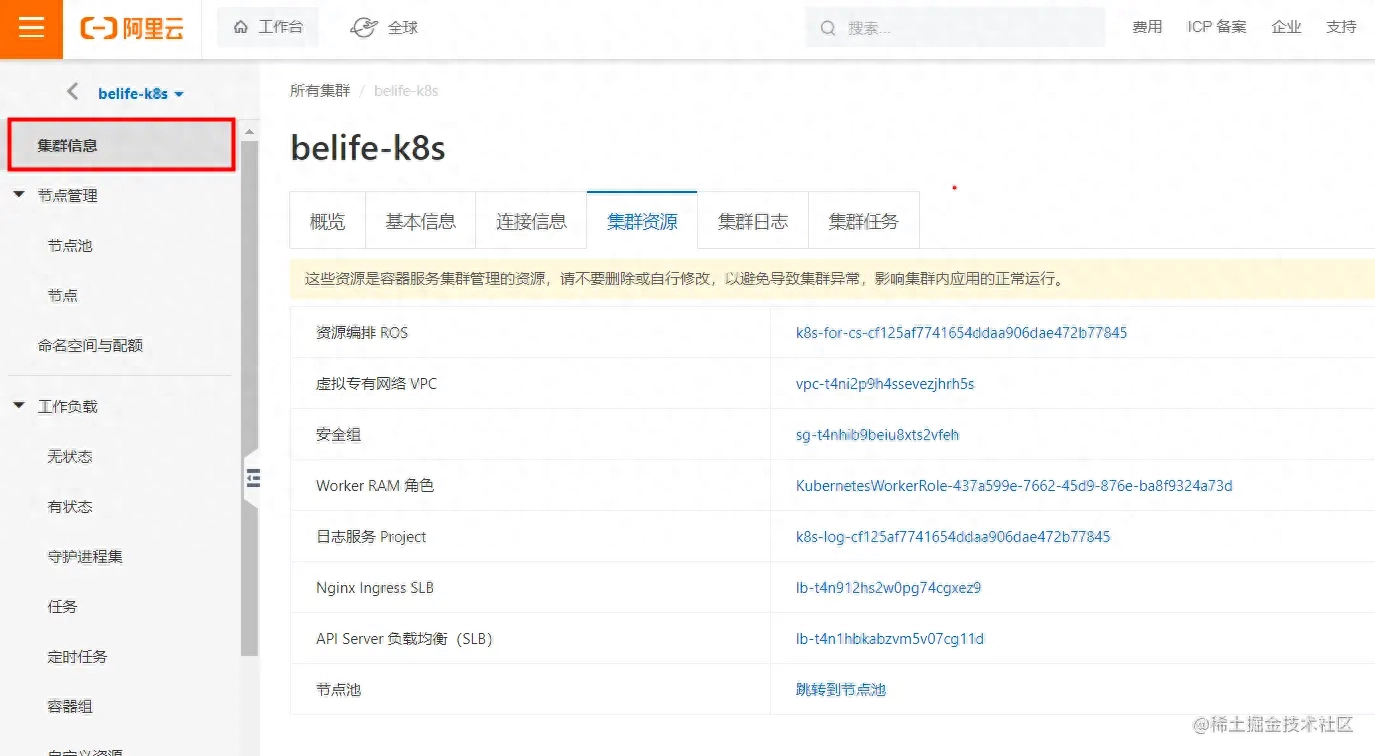

After the order completes, wait a while, then open the cluster we created to view its resources and other information:

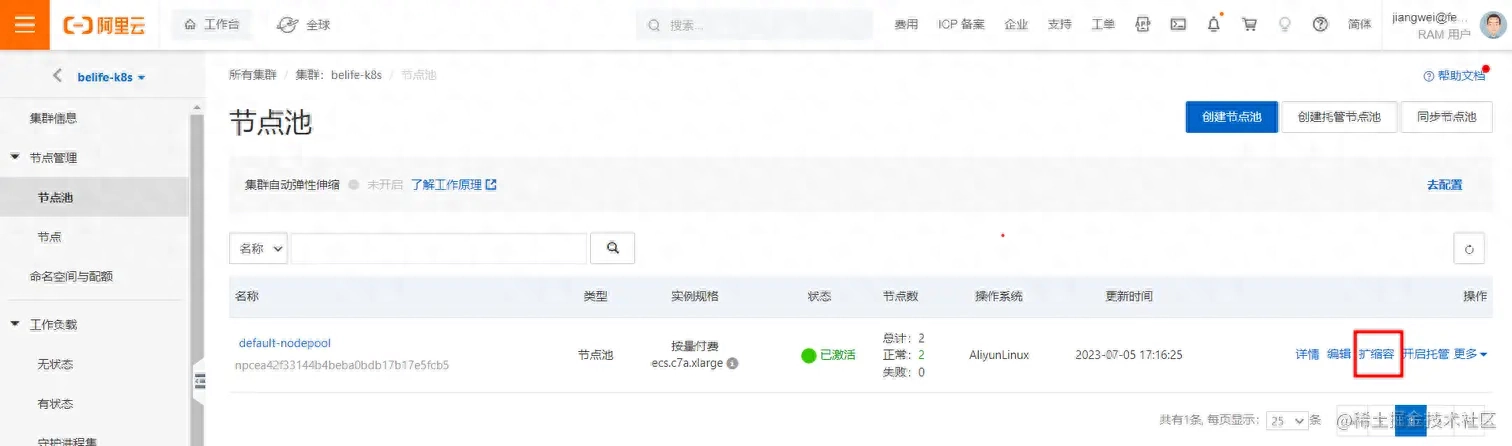

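Purchase new nodes to scale out the cluster. Based on the configuration above, at least 2 nodes are needed to support the whole cluster:

Alibaba Cloud automatically purchases the nodes according to the ECS configuration we preset above; if you want to change it, you can do so here:

9. Install kubectl

Back on our deployment machine, return to the root directory: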

    curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl"
    curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl.sha256"
    echo "$(cat kubectl.sha256)  kubectl" | sha256sum --check
    sudo install -o root -g root -m 0755 kubectl /usr/local/bin/kubectl
    kubectl version --short
    # Client Version: v1.27.3
    # Kustomize Version: v5.0.1
    # Server Version: v1.22.15-aliyun.1
    # WARNING: version difference between client (1.27) and server (1.22) exceeds the supported minor version skew of +/-1
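The checksum step works because `sha256sum --check` reads lines of the form digest, two spaces, filename. As a small sketch of that mechanism, the following reproduces it offline with a scratch file (`kubectl.demo` is a made-up name standing in for the downloaded binary):

```shell
#!/bin/sh
work=$(mktemp -d)
cd "$work" || exit 1
printf 'demo payload\n' > kubectl.demo

# Keep only the digest, mimicking the bare-hash content of a published .sha256 file.
sha256sum kubectl.demo | cut -d ' ' -f 1 > kubectl.demo.sha256

# Rebuild the "<digest>  <file>" line (two spaces) and let sha256sum verify it.
result=$(echo "$(cat kubectl.demo.sha256)  kubectl.demo" | sha256sum --check)
echo "$result"
# prints: kubectl.demo: OK
```

If the file had been altered after the digest was recorded, the check would report FAILED and exit with a non-zero status.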

Open the cluster and view its connection information, then save the highlighted part of the Alibaba Cloud page (public or internal network) to our deployment machine:

    mkdir -p /root/.kube
    cd /root/.kube
    vim config
    # Paste the highlighted content here
    kubectl cluster-info
    # Kubernetes control plane is running at https://172.16.214.227:6443
    # metrics-server is running at https://172.16.214.227:6443/api/v1/namespaces/kube-system/services/heapster/proxy
    # KubeDNS is running at https://172.16.214.227:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy
    # To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

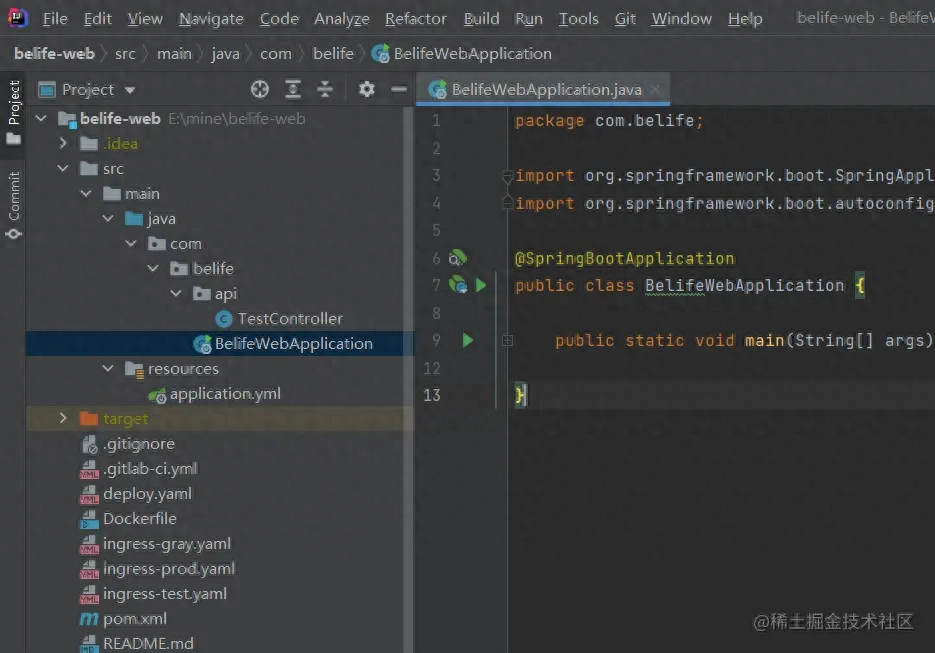

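10. Create a new SpringBoot project

Note the project's maven configuration: as on the deployment machine earlier, it connects to the nexus private repository we set up: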

pom.xml

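    <project>
      <modelVersion>4.0.0</modelVersion>
      <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>2.7.0</version>
      </parent>
      <groupId>com.belife</groupId>
      <artifactId>belife-web</artifactId>
      <version>1.0.0-SNAPSHOT</version>
      <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        <maven.compiler.source>1.8</maven.compiler.source>
        <maven.compiler.target>1.8</maven.compiler.target>
      </properties>
      <dependencies>
        <dependency>
          <groupId>org.springframework.boot</groupId>
          <artifactId>spring-boot-starter</artifactId>
        </dependency>
        <dependency>
          <groupId>org.springframework.boot</groupId>
          <artifactId>spring-boot-starter-web</artifactId>
        </dependency>
        <dependency>
          <groupId>org.springframework.boot</groupId>
          <artifactId>spring-boot-starter-test</artifactId>
          <scope>test</scope>
        </dependency>
      </dependencies>
      <build>
        <finalName>belife-web</finalName>
        <plugins>
          <plugin>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-maven-plugin</artifactId>
          </plugin>
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-compiler-plugin</artifactId>
            <configuration>
              <source>${maven.compiler.source}</source>
              <target>${maven.compiler.target}</target>
            </configuration>
          </plugin>
        </plugins>
      </build>
    </project>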

application.yml

    server:
      port: 8080
    spring:
      application:
        name: belife-web
      profiles:
        active: dev

BelifeWebApplication.java

    package com.belife;

    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;

    @SpringBootApplication
    public class BelifeWebApplication {
        public static void main(String[] args) {
            SpringApplication.run(BelifeWebApplication.class, args);
        }
    }

TestController.java

    package com.belife.api;

    import org.springframework.beans.factory.annotation.Value;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RequestMethod;
    import org.springframework.web.bind.annotation.RestController;

    @RestController
    @RequestMapping("/v1")
    public class TestController {

        @Value("${spring.profiles.active}")
        private String env;

        @RequestMapping(value = "/heartbeat", method = {RequestMethod.GET})
        public String heartbeat() {
            return "OK";
        }

        @RequestMapping(value = "/app/test", method = {RequestMethod.GET, RequestMethod.POST})
        public String test() {
            return "This is a test, env: " + env;
        }
    }

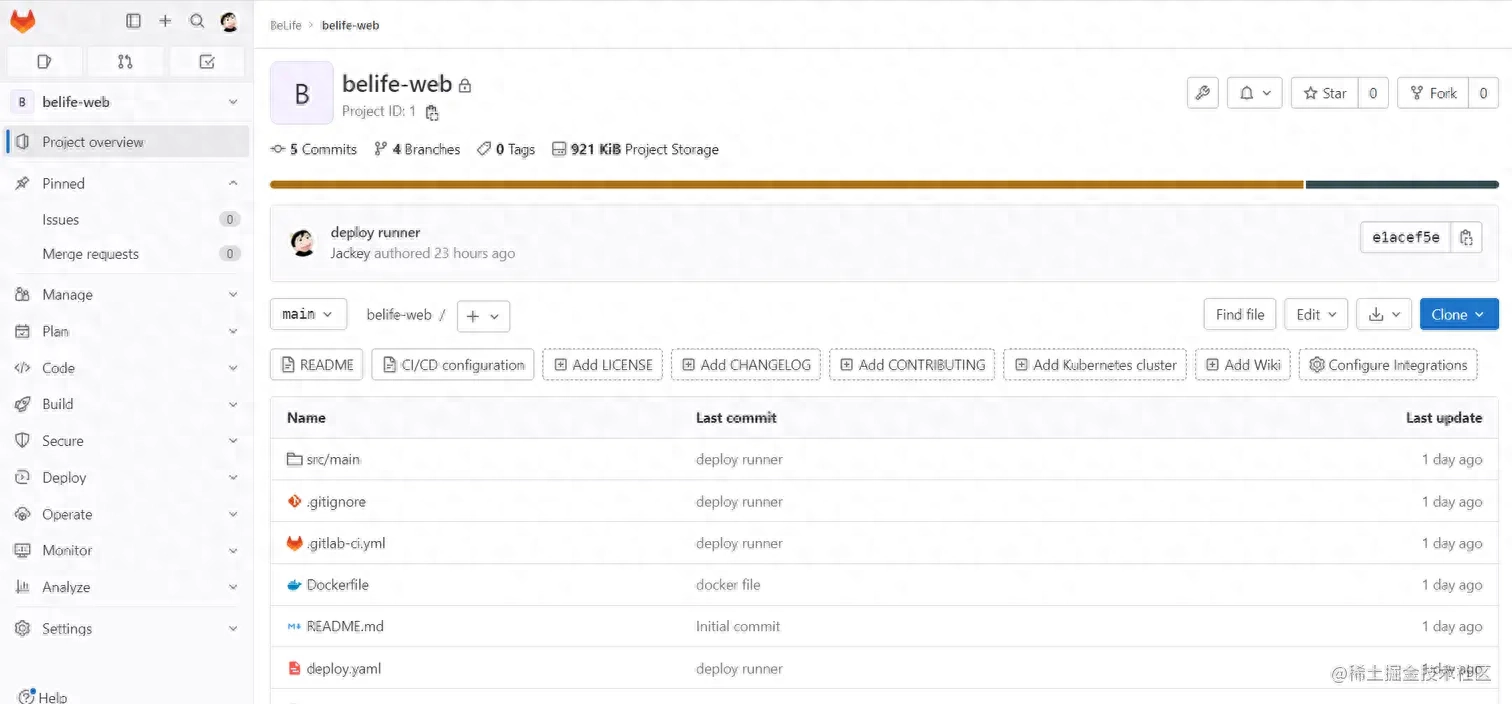

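Push the project to the gitlab we set up ourselves:

11. Write the CI/CD scripts

Dockerfile (its contents are not explained in detail here; this should be an essential skill for a programmer)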

    FROM azul/zulu-openjdk-centos:8
    ENV LANG en_US.UTF-8
    ENV LC_ALL en_US.UTF-8
    ENV LANGUAGE en_US:en
    ENV TZ=Asia/Shanghai
    RUN ln -snf /usr/share/zoneinfo/$TZ /etc/localtime && echo $TZ > /etc/timezone
    ADD ./target/belife-web.jar /srv
    WORKDIR /srv
    CMD java ${JAVA_OPTS} -jar /srv/belife-web.jar

.gitlab-ci.yml

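Here we define 3 pipeline stages:

package handles the maven packaging; build handles building and pushing the docker image; deploy publishes to the Alibaba Cloud k8s cluster, and the yaml manifests it uses are given later.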

    stages:
      - package
      - build
      - deploy

    variables:
      NAMESPACE: belife-${CI_COMMIT_BRANCH}
      NODE_LABELS: deploy-${CI_COMMIT_BRANCH}
      REGISTRY_NAME: "registry.ap-southeast-1.aliyuncs.com/belife"
      PROJECT_NAME: ${CI_PROJECT_NAME}-${CI_COMMIT_BRANCH}
      IMAGE_NAME: ${REGISTRY_NAME}/${PROJECT_NAME}
      DEPLOY_NAME: ${PROJECT_NAME}
      SERVICE_NAME: service-${PROJECT_NAME}
      INGRESS_NAME: ingress-${PROJECT_NAME}
      LOG_STORE_OUT: aliyun_logs_${PROJECT_NAME}
      LOG_STORE_INIT: aliyun_logs_${PROJECT_NAME}_logstore
      K8S_WORKER_ROLE: KubernetesWorkerRole-437a599e-7662-45d9-876e-ba8f9324a73d
      PORT: "80"
      HEALTH_URL: "/v1/heartbeat"
      JAVA_OPTS: "-Dspring.profiles.active=${CI_COMMIT_BRANCH} -Dserver.port=${PORT}
        -Djava.awt.headless=true -Djava.net.preferIPv4Stack=true -Dfile.encoding=utf8
        -Xms1024m -Xmx1024m -XX:MetaspaceSize=256M -XX:MaxNewSize=512m -XX:MaxMetaspaceSize=512m
        -Dram.role.name=${K8S_WORKER_ROLE}"

    cache: &global_cache
      key: ${CI_COMMIT_BRANCH}
      paths:
        - "target/${CI_PROJECT_NAME}.jar"

    maven-package:
      stage: package
      tags:
        - runner-k8s
      only:
        - test@belife/belife-web
        - gray@belife/belife-web
        - prod@belife/belife-web
      cache:
        <<: *global_cache
        policy: push
      script:
        - mvn clean package -Dmaven.test.skip=true

    docker-build:
      stage: build
      tags:
        - runner-k8s
      only:
        - test@belife/belife-web
        - gray@belife/belife-web
        - prod@belife/belife-web
      cache:
        <<: *global_cache
        policy: pull
      script:
        - docker build -t ${IMAGE_NAME}:$CI_COMMIT_SHORT_SHA .
        - docker push ${IMAGE_NAME}:$CI_COMMIT_SHORT_SHA

    k8s-deploy-test:
      stage: deploy
      tags:
        - runner-k8s
      only:
        - test@belife/belife-web
      cache: {}
      script:
        - envsubst < deploy.yaml | cat -
        - envsubst < deploy.yaml | kubectl apply -f -
        - envsubst < ingress-test.yaml | cat -
        - envsubst < ingress-test.yaml | kubectl apply -f -
        - kubectl rollout status deployment/$DEPLOY_NAME -n $NAMESPACE
      variables:
        REPLICAS: 1

    k8s-deploy-gray:
      stage: deploy
      tags:
        - runner-k8s
      only:
        - gray@belife/belife-web
      cache: {}
      script:
        - envsubst < deploy.yaml | cat -
        - envsubst < deploy.yaml | kubectl apply -f -
        - envsubst < ingress-gray.yaml | cat -
        - envsubst < ingress-gray.yaml | kubectl apply -f -
        - kubectl rollout status deployment/$DEPLOY_NAME -n $NAMESPACE
      variables:
        REPLICAS: 1
        NAMESPACE: belife-prod

    k8s-deploy-prod:
      stage: deploy
      tags:
        - runner-k8s
      only:
        - prod@belife/belife-web
      cache: {}
      script:
        - envsubst < deploy.yaml | cat -
        - envsubst < deploy.yaml | kubectl apply -f -
        - envsubst < ingress-prod.yaml | cat -
        - envsubst < ingress-prod.yaml | kubectl apply -f -
        - kubectl rollout status deployment/$DEPLOY_NAME -n $NAMESPACE
      variables:
        REPLICAS: 2
      when: manual

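The top-level variables block defines environment variables that can be used not only in .gitlab-ci.yml but also in the k8s yaml manifests; tags selects the gitlab-runner we registered earlier under the tag runner-k8s to run the jobs.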
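The deploy jobs render the manifests with envsubst, which substitutes an empty string for any variable that is not set, so a missing variable only shows up later as a confusing kubectl error. A minimal sketch of a guard that could run before rendering follows; `require_vars` is a hypothetical helper, not something GitLab or gettext provides.

```shell
#!/bin/sh
# require_vars NAME...: fail, naming the first variable that is unset or empty.
# Hypothetical guard for illustration only.
require_vars() {
  for name in "$@"; do
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing variable: $name" >&2
      return 1
    fi
  done
  return 0
}

NAMESPACE=belife-test
DEPLOY_NAME=belife-web-test
unset REPLICAS
if require_vars NAMESPACE DEPLOY_NAME REPLICAS 2>/dev/null; then
  first_check=ok
else
  first_check=missing
fi

REPLICAS=1
if require_vars NAMESPACE DEPLOY_NAME REPLICAS; then
  second_check=ok
else
  second_check=missing
fi
echo "first: $first_check, second: $second_check"
# prints: first: missing, second: ok
```

In a job script this could be placed in front of the render step, for example `require_vars NAMESPACE DEPLOY_NAME REPLICAS && envsubst < deploy.yaml | kubectl apply -f -`.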

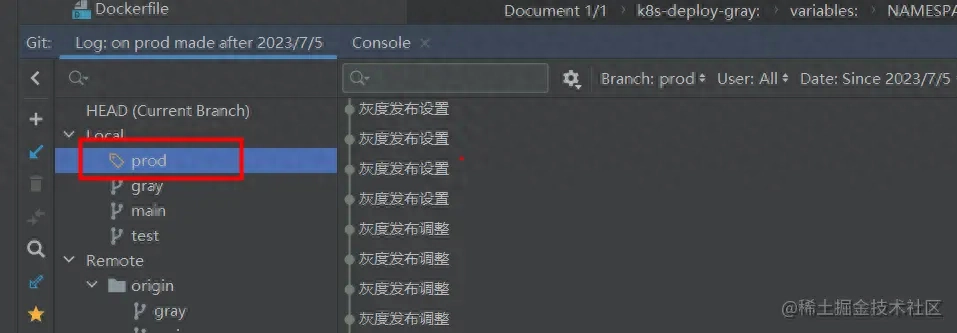

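{CI_PROJECT_NAME} and {CI_COMMIT_BRANCH} are predefined GitLab CI variables: {CI_PROJECT_NAME} is belife-web and {CI_COMMIT_BRANCH} is the branch name. Commits to the test, gray and prod branches trigger the deploy job for the corresponding environment. gray and prod must be in the same namespace. test and gray each start 1 container instance; prod starts 2, and its deploy job is set to manual, so it has to be triggered by hand.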
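To make the naming concrete, here is a small sketch that mimics how the variables block expands for each branch. `derive_names` is a hypothetical function written for this illustration; the real expansion is done by GitLab, and the gray override mirrors the NAMESPACE set in the k8s-deploy-gray job.

```shell
#!/bin/sh
# derive_names PROJECT BRANCH: print the names the pipeline would compute.
derive_names() {
  project=$1
  branch=$2
  project_name="${project}-${branch}"
  namespace="belife-${branch}"
  # The k8s-deploy-gray job overrides NAMESPACE so gray lands in belife-prod.
  if [ "$branch" = "gray" ]; then
    namespace="belife-prod"
  fi
  printf 'NAMESPACE=%s\n' "$namespace"
  printf 'DEPLOY_NAME=%s\n' "$project_name"
  printf 'SERVICE_NAME=service-%s\n' "$project_name"
}

for b in test gray prod; do
  echo "# branch: $b"
  derive_names belife-web "$b"
done
```

For the gray branch this prints NAMESPACE=belife-prod, DEPLOY_NAME=belife-web-gray and SERVICE_NAME=service-belife-web-gray, which are the names the canary Ingress refers to later.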

yml复制代码apiVersion: apps/v1

kind: Deployment

metadata:

name: $DEPLOY_NAME # Pod名称称

namespace: $NAMESPACE # 命名空间

spec:

replicas: $REPLICAS # 分片个数

revisionHistoryLimit: 3

selector:

matchLabels:

app: $CI_PROJECT_NAME

template:

metadata:

labels:

app: $CI_PROJECT_NAME

spec:

restartPolicy: Always

containers:

- name: $CI_PROJECT_NAME

image: ${IMAGE_NAME}:$CI_COMMIT_SHORT_SHA # 镜像名称

imagePullPolicy: Always # 总是拉取镜像

resources:

limits: # 资源限制

cpu: 500m

memory: 2000Mi

requests: # 所需资源

cpu: 200m

memory: 1200Mi

ports:

- name: http

containerPort: $PORT # 端口设置

env:

- name: JAVA_OPTS # 环境变量

value: $JAVA_OPTS

- name: $LOG_STORE_OUT # 阿里云SLS相关

value: stdout

- name: $LOG_STORE_INIT # 阿里云SLS相关

value: $PROJECT_NAME

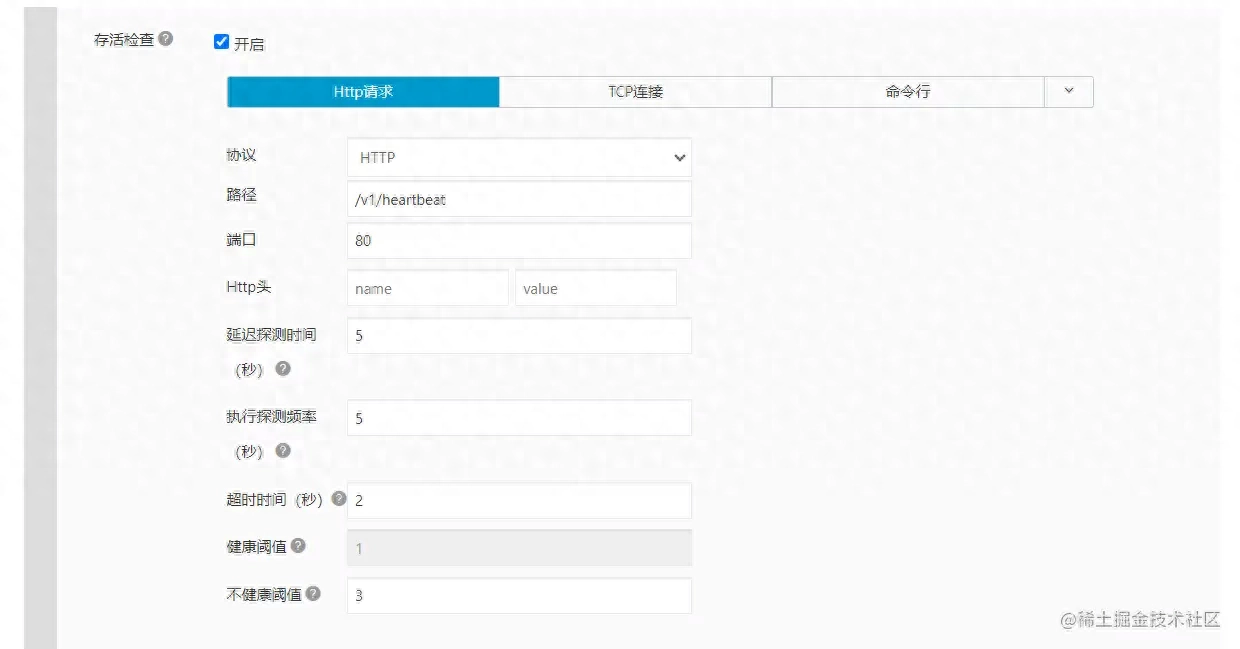

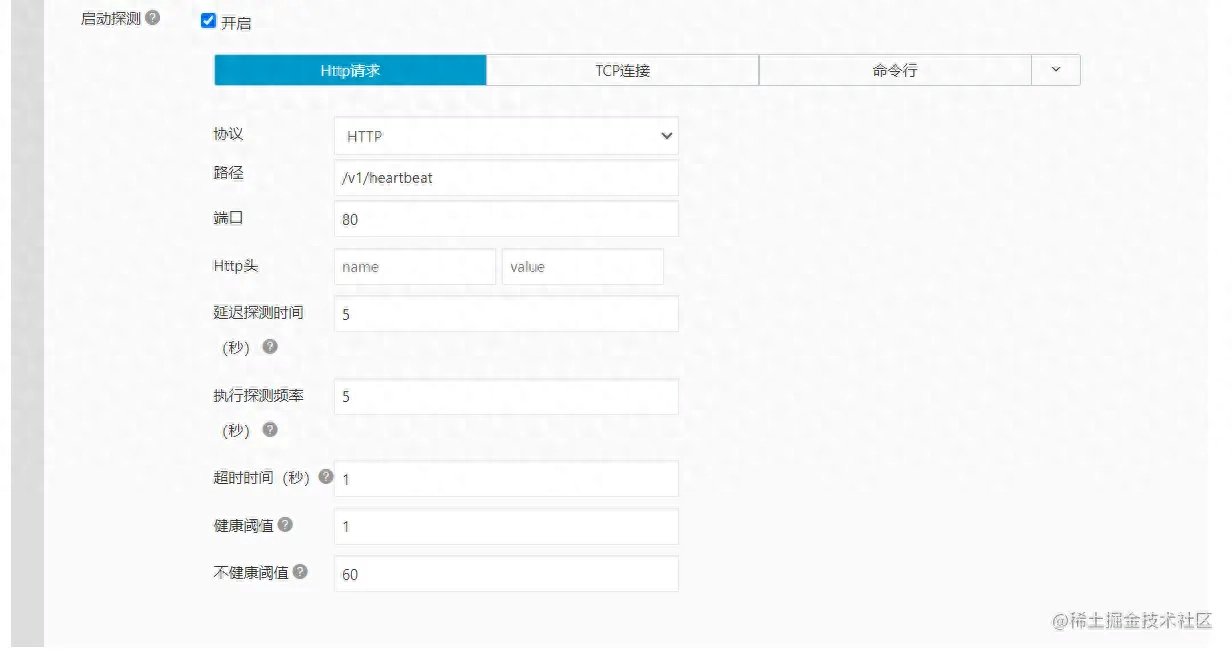

startupProbe: # 启动检查探针

httpGet:

path: $HEALTH_URL

port: $PORT

scheme: HTTP

initialDelaySeconds: 5

timeoutSeconds: 1

periodSeconds: 5

successThreshold: 1

failureThreshold: 60

livenessProbe: # 存活检查探针

httpGet:

path: $HEALTH_URL

port: $PORT

scheme: HTTP

initialDelaySeconds: 5

timeoutSeconds: 2

periodSeconds: 5

successThreshold: 1

failureThreshold: 3

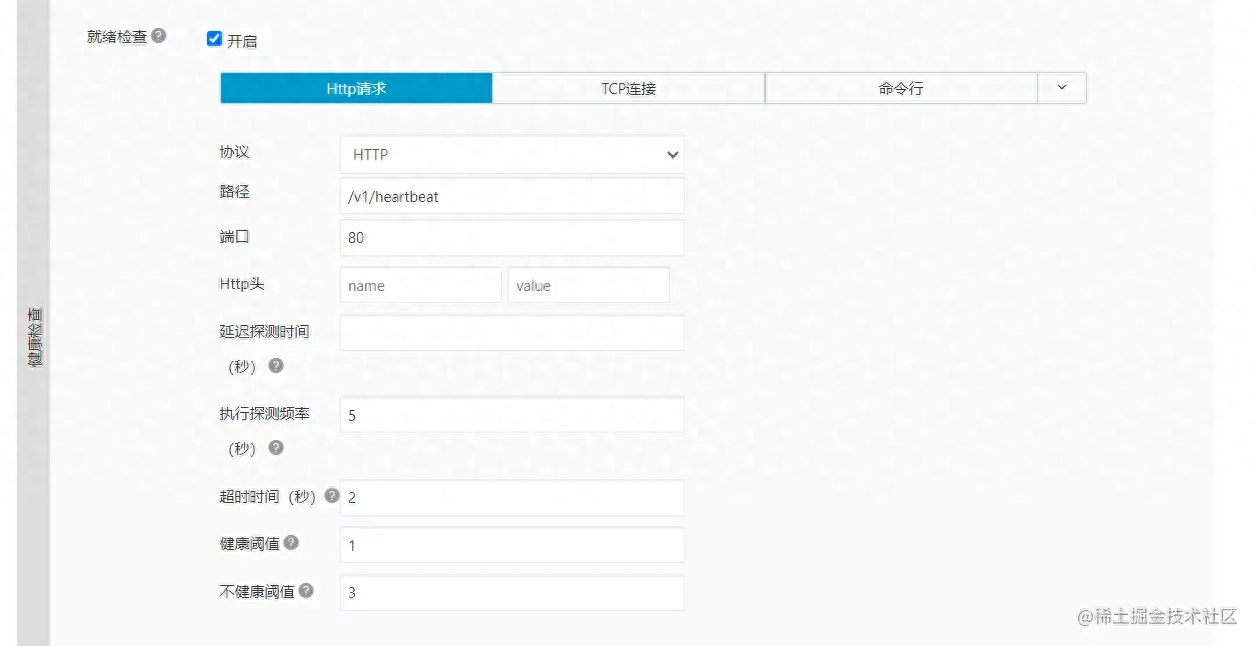

readinessProbe: # 就绪检查探针

httpGet:

path: $HEALTH_URL

port: $PORT

scheme: HTTP

timeoutSeconds: 2

periodSeconds: 5

successThreshold: 1

failureThreshold: 3

affinity:

nodeAffinity: # 节点亲和性,软亲和性

preferredDuringSchedulingIgnoredDuringExecution:

- weight: 1

preference:

matchExpressions:

- key: node.labels.deploy

operator: NotIn # 防止分片启动到同一个节点

values:

- $NODE_LABELS

---

apiVersion: v1

kind: Service

metadata:

name: $SERVICE_NAME

namespace: $NAMESPACE

spec:

selector:

app: $CI_PROJECT_NAME

type: ClusterIP

ports:

- port: $PORT

targetPort: $PORT

name: $CI_PROJECT_NAME

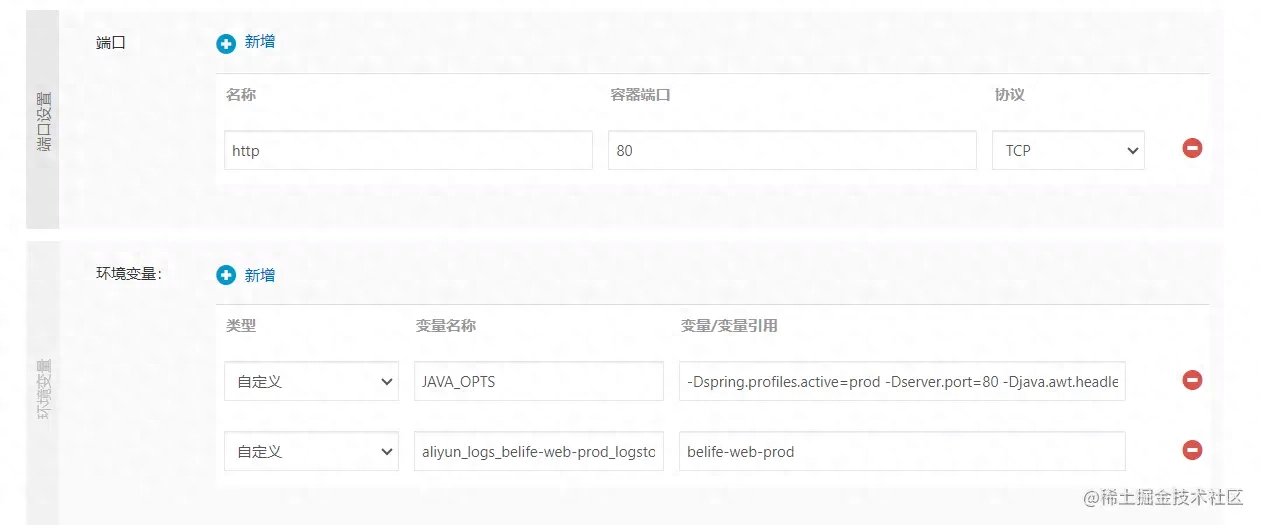

截取发布后的几个图,便捷你们对照注释理解:
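The startupProbe values in deploy.yaml (initialDelaySeconds 5, periodSeconds 5, failureThreshold 60) bound how long the application may take to start. As a rough back-of-the-envelope sketch, ignoring probe timeouts and scheduling jitter, the budget is the initial delay plus period times failure threshold; `probe_budget` is a hypothetical helper for the arithmetic only.

```shell
#!/bin/sh
# probe_budget INITIAL_DELAY PERIOD FAILURE_THRESHOLD:
# approximate seconds before the kubelet gives up on the container.
probe_budget() {
  echo $(( $1 + $2 * $3 ))
}

# startupProbe: 5s delay, probed every 5s, up to 60 failures.
startup_budget=$(probe_budget 5 5 60)
# livenessProbe once running: 3 consecutive failures, 5s apart (delay ignored here).
liveness_budget=$(probe_budget 0 5 3)

echo "startup: ${startup_budget}s, liveness: ${liveness_budget}s"
# prints: startup: 305s, liveness: 15s
```

So with these settings a slow JVM start has roughly five minutes before the container is restarted, while a running container that stops answering /v1/heartbeat is restarted after about 15 seconds.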

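The nginx-ingress routing configuration differs per environment. Here we have deliberately built a canary-release walkthrough: note that gray and prod not only live in the same namespace but also share the same Ingress resource, so each deployment dynamically overwrites the previous one to achieve a different effect. The details are explained later.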

ingress-test.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: $INGRESS_NAME
      namespace: $NAMESPACE
      annotations:
        kubernetes.io/ingress.class: "nginx"
    spec:
      rules:
        - host: test.home.belifeapp.net
          http:
            paths:
              - path: /v1
                pathType: Prefix
                backend:
                  service:
                    name: ${SERVICE_NAME}
                    port:
                      number: $PORT

ingress-prod.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: ingress-${CI_PROJECT_NAME}-gray-prod
      namespace: $NAMESPACE
      annotations:
        kubernetes.io/ingress.class: "nginx"
    spec:
      rules:
        - host: home.belifeapp.net
          http:
            paths:
              - path: /v1
                pathType: Prefix
                backend:
                  service:
                    name: ${SERVICE_NAME}
                    port:
                      number: $PORT

ingress-gray.yaml

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: ingress-${CI_PROJECT_NAME}-gray-prod
      namespace: $NAMESPACE
      annotations:
        kubernetes.io/ingress.class: "nginx"
        nginx.ingress.kubernetes.io/service-match: |
          service-${CI_PROJECT_NAME}-gray: header("gray", true)
        nginx.ingress.kubernetes.io/service-weight: |
          service-${CI_PROJECT_NAME}-gray: 30, service-${CI_PROJECT_NAME}-prod: 70
    spec:
      rules:
        - host: home.belifeapp.net
          http:
            paths:
              - path: /v1
                pathType: Prefix
                backend:
                  service:
                    name: service-${CI_PROJECT_NAME}-gray
                    port:
                      number: $PORT
              - path: /v1
                pathType: Prefix
                backend:
                  service:
                    name: service-${CI_PROJECT_NAME}-prod
                    port:
                      number: $PORT

12. Deploy to the test environment

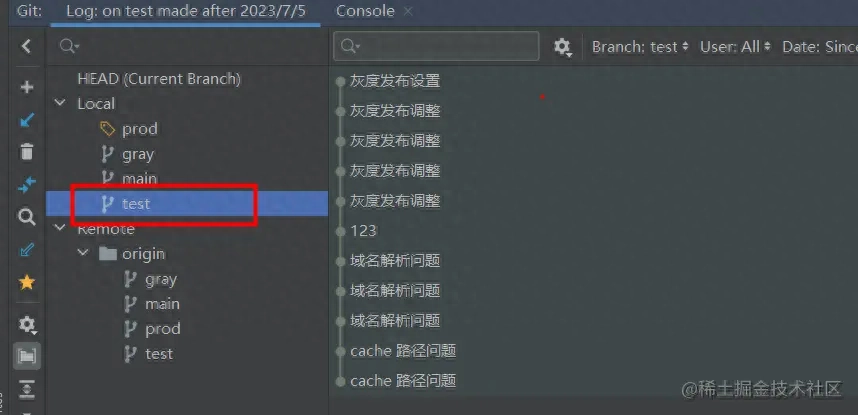

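Create a branch named test and commit or merge into it to trigger the release pipeline:

In gitlab, open your project and go to Build -> Pipelines to see the execution progress and logs:

Once the deployment has finished, check in the cluster namespace that everything is OK; clicking Edit shows the Pod information from the screenshots above: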

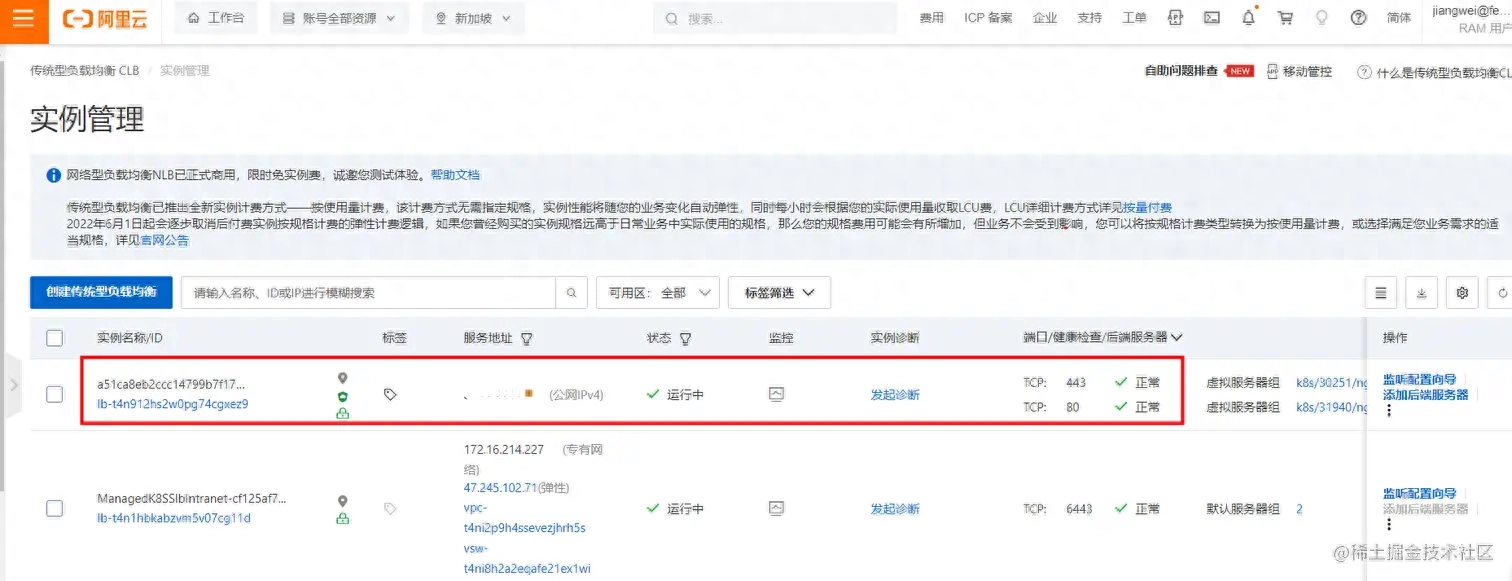

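Look at the SLB load balancers that Alibaba Cloud configured automatically when we purchased the k8s cluster (the other one belongs to the api-server); you can open them directly from the links in the cluster's resource list:

I have blurred the public IP in the screenshot. In the Alibaba Cloud DNS service, add a record that resolves the domain name to this public IP:

Try this address in a browser: /v1/app/test

    This is a test, env: test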
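The same check can be scripted instead of done in a browser. This is a minimal sketch: `env_of` is a hypothetical helper that extracts the environment name from the response text returned by TestController, and the body is hard-coded here so the example runs without network access.

```shell
#!/bin/sh
# env_of BODY: pull the environment name out of the /v1/app/test response text.
env_of() {
  printf '%s\n' "$1" | sed -n 's/^This is a test, env: //p'
}

# Sample body; against a real deployment you would fetch it instead, e.g.
#   body=$(curl -s "http://<your-domain>/v1/app/test")
body='This is a test, env: test'
actual=$(env_of "$body")

if [ "$actual" = "test" ]; then
  echo "test environment is serving"
else
  echo "unexpected environment: $actual" >&2
fi
```

Changing the expected value to prod or gray gives the equivalent check for the other environments.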

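13. Deploy to the production environment

Deploying to production is similar to deploying to test. After the deployment finishes, check that everything is OK:

Try this address in a browser: /v1/app/test

    This is a test, env: prod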

14. Canary release (blue-green release)

We deploy belife-web-gray and belife-web-prod to the same namespace, belife-prod, and switch traffic dynamically through nginx-ingress.

You may remember that the ingress-gray.yaml above contains the following settings:

      annotations:
        kubernetes.io/ingress.class: "nginx"
        nginx.ingress.kubernetes.io/service-match: |
          service-${CI_PROJECT_NAME}-gray: header("gray", true)
        nginx.ingress.kubernetes.io/service-weight: |
          service-${CI_PROJECT_NAME}-gray: 30, service-${CI_PROJECT_NAME}-prod: 70

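If a request carries the HTTP header gray: true, all of its traffic goes to the belife-web-gray canary service, which is convenient for testing. If the header is absent, traffic is split 30/70: 30% goes to the belife-web-gray canary environment and 70% to the belife-web-prod production environment. You can of course set the ratio yourself.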

Try this address in a browser: /v1/app/test

    This is a test, env: gray # 30% probability
    This is a test, env: prod # 70% probability
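The routing rule described above can be modelled in a few lines. This sketch only mirrors the behaviour as the article describes it (header match first, then a 30/70 split); it is not the ingress controller's implementation, and `route` with its ROLL argument is a hypothetical stand-in for the controller's random pick.

```shell
#!/bin/sh
# route GRAY_HEADER ROLL: GRAY_HEADER is the value of the "gray" request header
# ("" when absent); ROLL is an integer 0-99 standing in for the random pick.
route() {
  if [ "$1" = "true" ]; then
    echo gray            # header match: always the canary service
  elif [ "$2" -lt 30 ]; then
    echo gray            # 30 of every 100 rolls
  else
    echo prod            # the remaining 70
  fi
}

gray_hits=0
roll=0
while [ "$roll" -lt 100 ]; do
  if [ "$(route "" "$roll")" = "gray" ]; then
    gray_hits=$((gray_hits + 1))
  fi
  roll=$((roll + 1))
done
echo "no header: $gray_hits of 100 rolls reach gray"
echo "gray: true header with roll 99 -> $(route true 99)"
```

Against the real cluster, the header case would be exercised with a request that sends the gray: true header to /v1/app/test.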

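Canary verified: deploy to production again

Attentive readers may already have noticed that in ingress-gray.yaml and ingress-prod.yaml above, the Ingress has the same name, ingress-belife-web-gray-prod. What does this mean?

Let's look at ingress-prod.yaml again: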

    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: ingress-${CI_PROJECT_NAME}-gray-prod
      namespace: $NAMESPACE
      annotations:
        kubernetes.io/ingress.class: "nginx"
    spec:
      rules:
        - host: home.belifeapp.net
          http:
            paths:
              - path: /v1
                pathType: Prefix
                backend:
                  service:
                    name: ${SERVICE_NAME}
                    port:
                      number: $PORT

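This means that when I deploy to production again, the canary routing rule named ingress-belife-web-gray-prod is overwritten: canary and production use the same Ingress name, so kubectl apply updates it in place. Once the canary environment has been verified, 100% of the traffic switches to the belife-web-prod production environment.

There are many ways to do a canary release, and readers can explore them on their own; this is just a simple demonstration.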